10 Methods to Keep Your DeepSeek Rising Without Burning the Midnight …

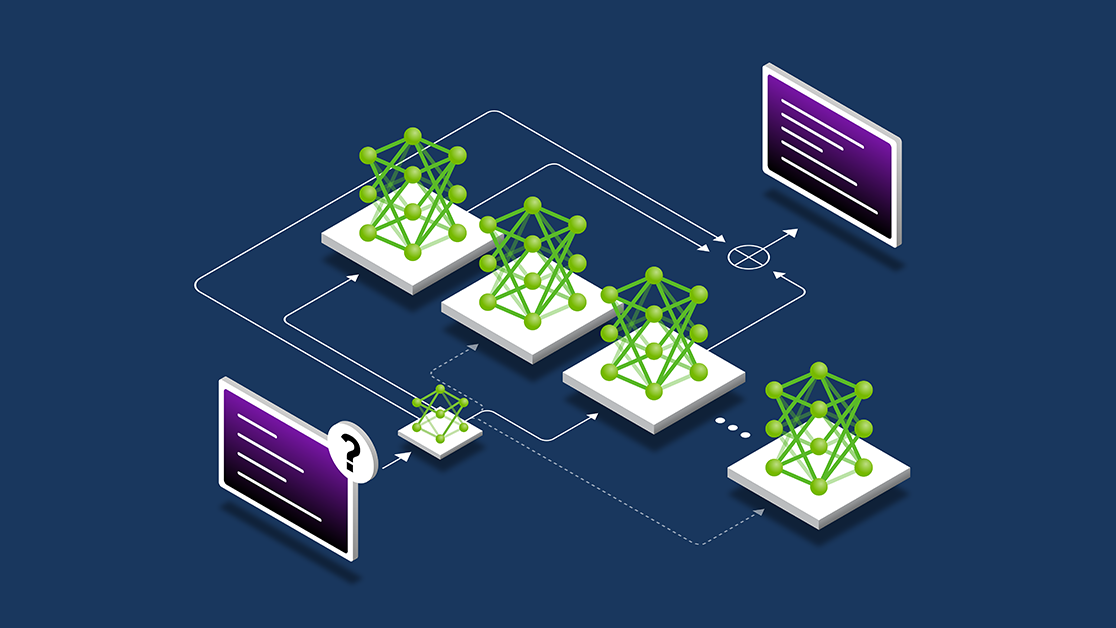

This repo contains GGUF format model files for DeepSeek's Deepseek Coder 33B Instruct. That JSON includes full copies of all of the responses, base64 encoded if they are binary files such as images. In this sense, the whale logo checks out; this is an industry full of Ahabs. Discusses DeepSeek's impact on the AI industry and its challenge to traditional tech giants. In 2023, President Xi Jinping summarized the culmination of these economic policies in a call for "new quality productive forces." In 2024, the Chinese Ministry of Industry and Information Technology issued a list of "future industries" to be targeted. There are no public reports of Chinese officials harnessing DeepSeek for personal information on U.S. However, there are a few potential limitations and areas for further research that could be considered. However, the paper acknowledges some potential limitations of the benchmark. One of the biggest limitations on inference is the sheer amount of memory required: you have to load both the model and the entire context window into memory. One is more aligned with free-market and liberal principles, and the other is more aligned with egalitarian and pro-government values. R1 and o1 specialize in breaking down requests into a chain of logical "thoughts" and examining each one individually.
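
To make the memory point concrete, here is a rough back-of-the-envelope sketch in Python. It assumes the model weights dominate one part of the budget and a key/value cache for the context window dominates the other. The 33 billion parameter count comes from the "33B" in the model name; the quantization width, layer count, head count, head size, and context length below are illustrative placeholders, not the published configuration of any DeepSeek model.

```python
def weights_bytes(n_params: int, bits_per_weight: int) -> int:
    """Approximate bytes needed to hold the model weights."""
    return n_params * bits_per_weight // 8


def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   context_len: int, bytes_per_value: int = 2) -> int:
    """Approximate bytes needed to cache keys and values for one sequence.

    The leading factor of 2 accounts for storing both keys and values.
    """
    return 2 * n_layers * n_kv_heads * head_dim * context_len * bytes_per_value


GIB = 1024 ** 3

# Assumed values for illustration only (hypothetical 4-bit quantization
# and a hypothetical architecture; check the real model card before use).
w = weights_bytes(n_params=33_000_000_000, bits_per_weight=4)
kv = kv_cache_bytes(n_layers=60, n_kv_heads=8, head_dim=128, context_len=4096)

print(f"weights:  {w / GIB:.2f} GiB")
print(f"kv cache: {kv / GIB:.2f} GiB")
print(f"total:    {(w + kv) / GIB:.2f} GiB")
```

The estimate ignores activations, runtime overhead, and any file-format metadata, so it should be read as a lower bound rather than a precise requirement.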

Early post-market analysis uncovered a critical flaw: DeepSeek lacks adequate safeguards against malicious requests. Take some time to familiarize yourself with the documentation to understand how to construct API requests and handle the responses. The benchmark involves synthetic API function updates paired with programming tasks that require using the updated functionality, challenging the model to reason about the semantic changes rather than just reproducing syntax. Flux, SDXL, and the other models aren't built for those tasks. This research represents a significant step forward in the field of large language models for mathematical reasoning, and it has the potential to impact various domains that rely on advanced mathematical skills, such as scientific research, engineering, and education. The research represents an important step forward in the ongoing efforts to develop large language models that can effectively tackle complex mathematical problems and reasoning tasks. Additionally, the paper does not address the potential generalization of the GRPO technique to other types of reasoning tasks beyond mathematics.
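
As a toy illustration of what "a synthetic API update paired with a programming task" could look like, consider the Python sketch below. Everything in it is hypothetical: the `top_k` function, its updated semantics, and the checker are invented for this example and are not taken from the benchmark itself. The point is only that a solution written against the old behavior fails once the semantics change.

```python
# Hypothetical updated API (invented for illustration): results are now
# ordered largest-first, and an optional `key` parameter controls ranking.
def top_k(items, k, key=None):
    return sorted(items, key=key, reverse=True)[:k]


def longest_words(words, n):
    """Task solution that uses the updated functionality (the `key` parameter)."""
    return top_k(words, n, key=len)


def stale_longest_words(words, n):
    """Solution written without knowledge of the update: no `key` is passed,
    so words are ranked alphabetically instead of by length."""
    return top_k(words, n)


def check(solution):
    """Task test: the two longest words, longest first."""
    return solution(["zz", "abcd", "y", "abc"], 2) == ["abcd", "abc"]


print("updated solution passes:", check(longest_words))
print("stale solution passes:  ", check(stale_longest_words))
```

The stale solution still runs without error, which is why a test of this kind has to check behavior rather than syntax.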

First, the paper does not provide a detailed analysis of the types of mathematical problems or concepts that DeepSeekMath 7B excels at or struggles with. First, they gathered a massive amount of math-related data from the web, including 120B math-related tokens from Common Crawl. First, they fine-tuned the DeepSeekMath-Base 7B model on a small dataset of formal math problems and their Lean 4 definitions to obtain the initial version of DeepSeek-Prover, their LLM for proving theorems. A version of this story was also published in the Vox Technology newsletter. Why it matters: Congress has struggled to navigate the security and administrative challenges posed by the rapid advancement of AI technology. DeepSeek R1 prioritizes security with: • End-to-End Encryption: Chats remain private and protected. Is DeepSeek detectable? In API benchmark tests, DeepSeek scored 15% higher than its nearest competitor in API error handling and efficiency. For example, the synthetic nature of the API updates may not fully capture the complexities of real-world code library changes. Overall, the CodeUpdateArena benchmark represents an important contribution to the ongoing efforts to improve the code generation capabilities of large language models and make them more robust to the evolving nature of software development.

Mathematical reasoning is a significant challenge for language models because of the complex and structured nature of mathematics. The paper introduces DeepSeekMath 7B, a large language model designed to excel at mathematical reasoning and pre-trained on a large amount of math-related data from Common Crawl, totaling 120 billion tokens. Despite these potential areas for further exploration, the overall approach and the results presented in the paper represent a significant step forward in the field of large language models for mathematical reasoning. As that field continues to evolve, the insights and techniques presented in this paper are likely to inspire further advances and contribute to the development of even more capable and versatile mathematical AI systems. This paper presents a new benchmark called CodeUpdateArena to evaluate how well large language models (LLMs) can update their knowledge about evolving code APIs, a critical limitation of current approaches. The benchmark represents an important step forward in evaluating that capability.
